Why Cyber Risk Quantification Is the New ‘Best Practice’

Written by: Black Kite

By Haley Williams, from the perspective of Black Kite CSO, Bob Maley

When I started building the cyber security program at PayPal, the first thing I did was research best practices. While I was certified as a TPRM professional, I learned the ins and outs of expected and typical practices as I built the program.

What I built, when benchmarked against the standard of “best practices”, was amazing. But when I took a step back and watched the program function in reality, those best practices just weren’t cutting it for successful third-party risk management.

Consult the Cyber Risk Research Experts

Reports, metrics and statistics regarding the current risk landscape tell an extremely powerful story of risk steadily increasing year after year. If you look at the 2022 IBM Cost of a Data Breach Report, as well as the 2022 Black Kite Cost of a Data Breach Report, you can see that costs are still going up, despite the push for these best practices. In fact, according to IBM, the average cost of a data breach in 2022 was $4.35 million, and the number of attacks is increasing. According to Black Kite, ransomware had an average cost per incident of $22.18 million from 2017 to 2022.

Impacts of a breach aren’t limited to the year of attack either. “The true cost of a data breach is often not fully realized immediately following the event. Even after a few months, the full scope of the damage may not yet be understood,” Black Kite head of research Ferhat Dikbiyik wrote in the report. “Companies have to deal with the consequences in the eyes of regulators, courts, and civil society for years.”

Comparing Classification-Based Risk Management to Methods Using Residual Risk

Are the current methods of third-party defense sufficient?

If the number of victims is going up and the impact isn’t slowing down, what are our current methods of defense doing to change the narrative? The reports are telling us that the best practices are not working in typical vendor risk management. It’s not that the threat actors are suddenly brilliant or finding new tactics; it’s that they’ve realized that companies with basic programs are easy targets.

Generally, best practices are classification based. They are overwhelming, difficult to manage, and ineffective. People tend to want to start with classification-based models, with tier 1, 2, and 3 vendors, etc. The fundamental flaw with this is the way vendors are categorized.

Inherent Risk vs Residual Risk

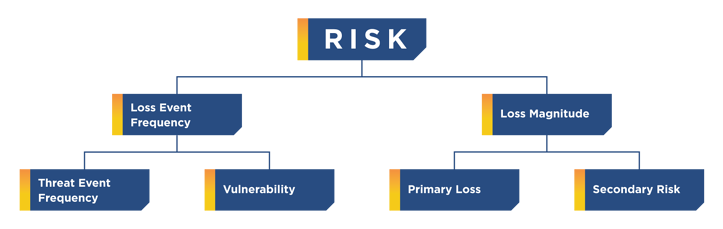

This classification-based method uses inherent risk: teams determine a vendor’s inherent risk by asking what the impact would be if no controls existed. They then apply controls to that figure to arrive at the residual risk.

According to the FAIR Institute, inherent risk represents the amount of risk that exists in the absence of controls. Residual risk is the amount of risk that remains after controls are accounted for. “The flaw with inherent risk is that in most cases, when used in practice, it does not explicitly consider which controls are being included or excluded. A truly inherent risk state, in our example, would assume no employee background checks or interviews are conducted and that no locks exist on any doors. This could lead to almost any risk scenario being evaluated as inherently high. Treating inherent risk therefore can be quite arbitrary.”

The Problem with Methods Monitoring Inherent Risk

1. Risk in this model is not clearly defined.

Risk should be determined using a real probabilistic study to determine the complete financial impact.

Let’s say a company has a database of 100 million customers. Within that database, you have credit card information, email addresses, physical addresses, and other PII. If that database were breached, the inherent risk (no controls) and cost of a breach of that size would be enormous. So your inherent risk is enormous.

However, you have several controls in place that reduce that risk, and that gives you the residual risk. While this is a good thought process, in reality, it doesn’t work too well. Instead of looking at it that way, you have to look at the entire cyber risk environment.

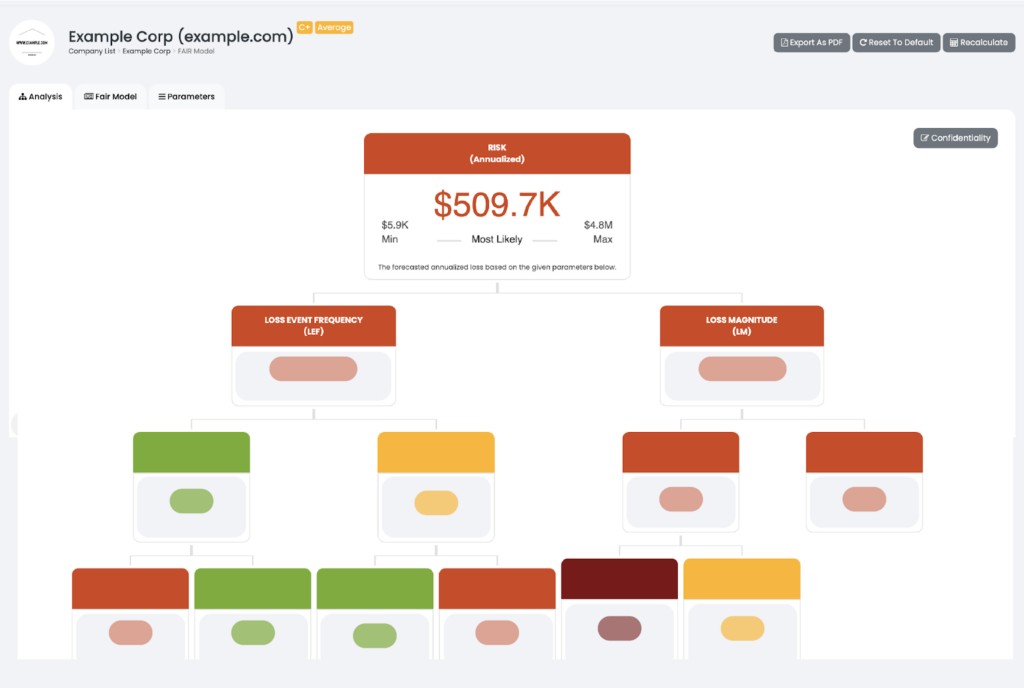

No company with 100 million customers has zero controls in place. A better way to look at the situation is: what is the risk that lives today? What is the value of that asset? Look at all of the controls in place and figure out the weakness/effectiveness of those controls. Complete factor analyses and risk assessments, while taking into account both effectiveness and value. Just because a control is in place doesn’t mean it’s effective. You can successfully do this by using the FAIR™ methodology.

Best Process for Handling Third-Party Risk Management

How does a FAIR™ assessment properly measure risk?

A FAIR™ assessment takes into account effectiveness of controls, but also other factors such as frequency of attack or compromise. With this information, you have a true, accurate look at what that risk means for you and your company specifically.
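To make the idea concrete, here is a minimal Monte Carlo sketch loosely following the FAIR idea that risk combines how often loss events occur with how much each one costs. It is an illustration only, not the FAIR standard's model or Black Kite's implementation: the function names, the triangular loss distribution, and every input number (12 threat events per year, control effectiveness of 50% vs 95%, losses between $50,000 and $400,000) are hypothetical assumptions.

```python
import random
import statistics


def simulate_annual_losses(threat_events_per_year, control_effectiveness,
                           loss_low, loss_high, trials=10_000, seed=0):
    """Simulate total loss per year over many trial years.

    All inputs are hypothetical estimates supplied by the analyst.
    """
    rng = random.Random(seed)  # fixed seed so runs are repeatable
    # Vulnerability: chance that a threat event gets past the controls.
    vulnerability = 1.0 - control_effectiveness
    losses = []
    for _ in range(trials):
        annual_loss = 0.0
        for _ in range(threat_events_per_year):
            if rng.random() < vulnerability:
                # Loss magnitude for this event, drawn from a simple
                # triangular distribution between the low and high estimates.
                annual_loss += rng.triangular(loss_low, loss_high)
        losses.append(annual_loss)
    return losses


def summarize(losses):
    """Return the mean and 95th-percentile annual loss."""
    p95 = statistics.quantiles(losses, n=20)[-1]
    return statistics.mean(losses), p95


# Same threat frequency and asset value, different control effectiveness.
weak = simulate_annual_losses(12, 0.50, 50_000, 400_000)
strong = simulate_annual_losses(12, 0.95, 50_000, 400_000)
print("weak controls:   mean=%.0f  p95=%.0f" % summarize(weak))
print("strong controls: mean=%.0f  p95=%.0f" % summarize(strong))
```

The point of the sketch is that the output is a distribution of dollar losses rather than a tier label: two vendors facing the same threat frequency can carry very different exposure once the effectiveness of their controls is estimated.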

According to the FAIR Institute, “FAIR’s risk model components are specifically designed to support risk quantification:

- A standard taxonomy and ontology for information and operational risk.

- A framework for establishing data collection criteria.

- Measurement scales for risk factors.

- A modeling construct for analyzing complex risk scenarios.

- Integration into computational engines such as RiskLens for calculating risk.”

Now, you can start building the rest of your third-party risk management program, based not on tiered vendors but on the vendors with the highest probable financial impact.
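As a rough sketch of what prioritizing by probable financial impact could look like, the snippet below ranks vendors by an estimated annual loss instead of by tier. The `Vendor` class, the vendor names, and all frequencies and loss amounts are hypothetical, and multiplying frequency by loss per event is a simplification of a full quantitative assessment.

```python
from dataclasses import dataclass


@dataclass
class Vendor:
    name: str
    tier: int                    # legacy classification-based tier (1 = most critical)
    loss_events_per_year: float  # estimated loss event frequency (assumed input)
    loss_per_event: float        # estimated loss magnitude in dollars (assumed input)

    @property
    def expected_annual_loss(self) -> float:
        # Simplified point estimate: frequency times magnitude.
        return self.loss_events_per_year * self.loss_per_event


def rank_by_financial_impact(vendors):
    """Order vendors from highest to lowest expected annual loss."""
    return sorted(vendors, key=lambda v: v.expected_annual_loss, reverse=True)


vendors = [
    Vendor("Payroll processor", tier=1, loss_events_per_year=0.02, loss_per_event=2_000_000),
    Vendor("Cloud host", tier=1, loss_events_per_year=0.05, loss_per_event=1_000_000),
    Vendor("Marketing analytics", tier=3, loss_events_per_year=0.30, loss_per_event=500_000),
]

for v in rank_by_financial_impact(vendors):
    print(f"{v.name:<20} tier {v.tier}  expected annual loss ${v.expected_annual_loss:,.0f}")
```

In this made-up portfolio the tier 3 vendor rises to the top of the list, which is the kind of mismatch a purely tier-based program would not surface.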

What does this method solve?

- Gets rid of the churn.

- Gets rid of wasted effort caused by inherent risk.

- Halts the build of a classification-based system.

Want to see FAIR in action?

Most CFOs agree a real-time financial data model is critical to enable better business decisions, forecasting models and data accuracy. Black Kite automatically provides real-time financial insights as part of a third-party risk program to help organizations maintain an acceptable level of loss exposure and a sufficient cyber security posture.
